The weather has immense potential to affect every facet of human existence — from personal plans to complex business operations. As climate change drives shifts in weather patterns, the necessity for a more precise, reliable, and comprehensive weather forecasting system is crystal clear.

But what does it take to build such a system? In a world where technology is rapidly evolving, are we still relying on centuries-old methods, or can we harness the power of cutting-edge technology to improve weather forecasts?

This blog will walk through the short history of weather forecasting and explain how the various dynamics have improved over time with advancements in satellites and forecasting models.

Framing the Present: Weather Forecasting Technology

Forecasting when, where, and how intensely weather events will unfold is much like solving a puzzle. There have to be coordinated observations of the atmosphere, land, and oceans. Powerful computer systems must be linked to run weather models ranging from global scale to micro-scale. Then the raw numbers from these predictions need to be communicated in an actionable way: the right kind of information, at the right time.

According to the latest national impacts report from NOAA, the economic damage from severe weather and climate change is worsening with each passing year. In 2021, the number of billion-dollar weather disasters was nearly three times the annual average over the last four decades. This underscores why it is crucial for countries, businesses, and individuals to have reliable, consistent, and hyper-local weather forecast tools.

At Tomorrow.io, we’re responding to this urgency by accelerating progress toward a better weather forecast for every location on the planet: collecting high-quality weather observations from dense sensor networks everywhere; running high-resolution, customizable global weather models; and developing a weather intelligence platform and intuitive user interface that provide contextual insights for decision support.

In this post, we’ll share how Tomorrow.io is rethinking and improving the entire weather value chain while providing relevant background along the way.

What is Weather Forecasting?

Weather forecasting is the application of scientific knowledge and technology to predict atmospheric conditions and changes in a specific location over a certain period of time. It involves the collection of data about the state of the atmosphere at a given place and time, and the understanding and application of meteorological principles to predict how the weather will change in that location.

Essentially, it’s about interpreting the intricate dance between various atmospheric elements, including temperature, humidity, wind velocity and direction, atmospheric pressure, and precipitation. These elements are tracked, measured, and analyzed to generate a weather forecast.

As we progress, the science of weather forecasting is rapidly evolving. Traditional methods are being complemented by innovative techniques and technologies, such as the use of artificial intelligence and machine learning algorithms, advanced computing, and improved data collection through satellites and IoT devices, leading to more precise and reliable forecasts. The result? We now have weather AI and weather intelligence tools at our disposal to improve the forecasting process.

The Advent of Modern National Weather Forecasting

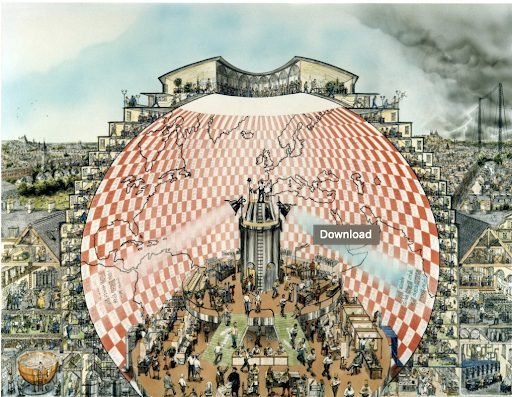

Effective approaches to predicting the weather were historically slow to materialize. Early visions of weather forecasting entailed situating thousands of people in an amphitheater, each responsible for a small piece of the puzzle. Forecasters would call out their predictions in a pre-arranged sequence until the big picture of impending weather emerged. But combining many small parts into a single weather forecast proved insurmountably difficult at the time.

Then came Gordon Moore (of “Moore’s Law” fame), who presciently recognized how quickly computers were coming of age. With more powerful computers, savvy meteorologists stopped trying to endlessly refine a single forecast. Instead, they ran many forecasts together, each encompassing a different possibility. The thought was that greater forecast accuracy could be achieved by finding a consensus among many weather predictions.
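To make the idea of a consensus forecast concrete, here is a minimal sketch in Python. It assumes the simplest possible consensus, the average of several model runs, and uses the spread between runs as a rough indicator of uncertainty. The function name and the sample temperatures are hypothetical and purely illustrative; operational systems combine runs in far more sophisticated ways.

```python
from statistics import mean, stdev


def ensemble_consensus(member_forecasts):
    """Return (consensus, spread) for one location and one forecast time.

    consensus: the average of all member forecasts
    spread:    the sample standard deviation across members; a larger
               spread means the runs disagree more
    """
    if len(member_forecasts) < 2:
        raise ValueError("need at least two ensemble members")
    return mean(member_forecasts), stdev(member_forecasts)


# Hypothetical temperature forecasts (deg C) from five separate model runs
members = [21.0, 22.5, 20.5, 23.0, 22.0]

consensus, spread = ensemble_consensus(members)
print(f"consensus: {consensus:.1f} C, spread: {spread:.2f} C")
```

A tight spread suggests the runs agree and the consensus can be trusted more; a wide spread is a signal that the forecast is less certain.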

Now as before, the fundamental scientific principles hold, and computer technology continues to advance at a breakneck pace. Forecasters have more data than ever before at their disposal. But what actually contributes to an accurate weather forecast in the first place?

Peering Inside the Blackbox of Weather Forecasting

There is an often-repeated phrase in the weather community when it comes to forecasting: “garbage in, garbage out.” You can go to great pains to have a physically realistic, comprehensive weather model running on state-of-the-art computers. But if you feed the weather model sparse and infrequent data, there is no way that the model will produce a quality weather forecast.

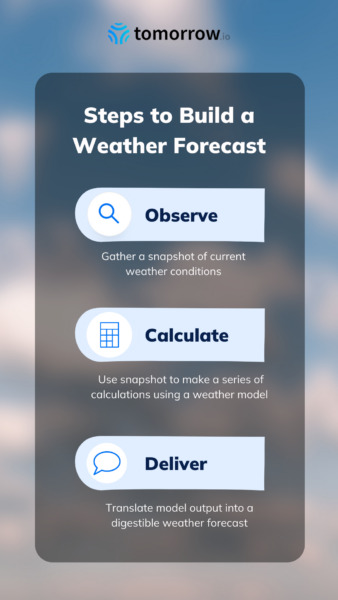

To explain why that is, we need to know more about how a weather forecast is created:

Observe: First, one needs abundant observations of the atmosphere, land, and ocean together. The goal is to get a complete initial snapshot of current weather conditions. After that, the observations must be intelligently blended with a weather model’s preliminary “best guess” at what the atmosphere looks like. With more and more observations, there are fewer gaps and smaller errors in the initial global view of the weather.

Calculate: “Running the weather model” really just means using that initial snapshot to make a series of calculations based on mathematical equations of the atmosphere. Once finished, the weather model produces geolocated and time-stamped numbers, and a great many of them. Rather than relay every weather datapoint, additional calculations further refine (or “post-process”) the output. A quality assurance check is also done routinely, comparing the forecast to the actual weather at a representative group of locations.

Deliver: It is then up to the forecaster to use their expertise to translate those numbers into a digestible weather forecast. This might mean adjusting the temperatures lower over areas with recent snowfall, reducing rainfall totals downwind of the mountains, or enhancing winds where terrain can funnel the flow from preferred directions. Much of this can be automated, and it usually happens behind the scenes. The insights that eventually get delivered are thoughtfully contextualized for each industry and hyper-localized to certain assets or geographic areas.
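The “Observe” step above describes blending observations with the model’s preliminary best guess. As a rough illustration, the sketch below uses a textbook single-variable update that weights each source by how uncertain it is. This is an assumption chosen for clarity, not a description of Tomorrow.io’s actual method; the function name, the variances, and the sample values are all hypothetical.

```python
def blend(background, observation, background_var, observation_var):
    """Blend a model first guess with one observation of the same quantity.

    Each input is weighted by its error variance: the less certain the
    model's first guess is relative to the observation, the further the
    result (the "analysis") moves toward the observation.
    """
    if background_var < 0 or observation_var < 0:
        raise ValueError("variances must be non-negative")
    total = background_var + observation_var
    if total == 0:
        raise ValueError("at least one variance must be positive")

    gain = background_var / total                  # weight given to the observation
    analysis = background + gain * (observation - background)
    analysis_var = (1 - gain) * background_var     # uncertainty after blending
    return analysis, analysis_var


# Hypothetical example: the model guesses 15.0 C with error variance 4.0,
# while a nearby station reports 17.0 C with error variance 1.0.
analysis, analysis_var = blend(15.0, 17.0, 4.0, 1.0)
print(f"analysis: {analysis:.1f} C, variance: {analysis_var:.2f}")
```

Because the blended variance is never larger than the background variance, each additional good observation shrinks the error in the starting snapshot, which is the reasoning behind “fewer gaps and smaller errors.”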

It seems simple enough: observe, calculate, and deliver. But there is more to the story. Businesses need more than a forecast to adjust their operations based on the weather. They require neat web-integrated platforms to get information with minimal delay. Companies will iterate on graphical user interfaces, near-real-time updates, and a bevy of machine learning solutions for connecting the dots between weather and a myriad of enterprise use cases. Like the weather forecast, it is a cycle of development…

With all that background in mind, it’s no surprise that the weather forecasting puzzle remains somewhat incomplete. The science and method of weather forecasting have greatly matured to this point, and yet there is still the occasional “miss”. We continually return to the notion of observations being a likely culprit, especially since weather models need quality observations to make quality weather forecasts.

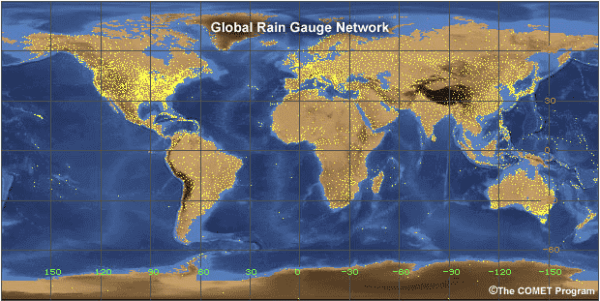

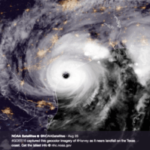

A Weather Forecasting Revolution – Tomorrow.io’s Satellites

As mentioned above, effective observation strategies address land, sea, and sky. But the weather enterprise as a whole has historically grappled with having insufficient numbers of observations to cover millions of square miles on Earth’s surface and the atmosphere above it. And some of the most meteorologically important locations, such as the African Sahel where hurricanes are seeded or the Gulf Stream over which intense Nor’easters develop, are the most difficult to reach and monitor in detail. There’s also a secondary issue of unforeseen disruptions to the stream of weather observations: floods inundating ground observation stations, buoys going randomly adrift, or weather sensors malfunctioning. Trying to then make a skillful weather forecast without those crucial observations is like starting out on a multi-day road trip entirely in the wrong direction.

If left unchecked, small errors observing the weather cascade into big operational mistakes because every natural system on Earth is so connected. That’s why scientists endeavoring to improve national weather forecasts turned their gaze upward toward space; they wanted to see if they could get more comprehensive observations from above.

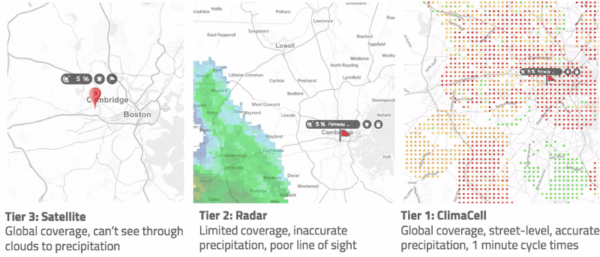

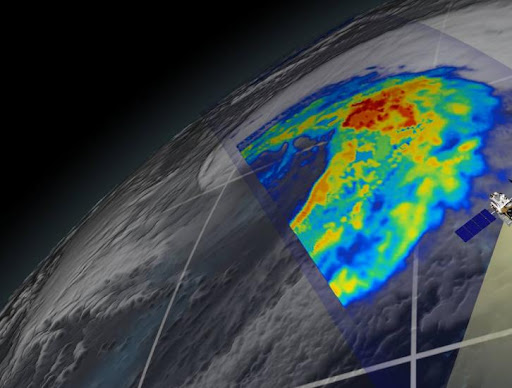

In April of 1960, NASA launched the first weather satellite to capture the equivalent of a grainy Polaroid approximately every half hour. The next satellites to launch in the 1970s and 1980s were gargantuan instruments the size of Greyhound buses, which gave us wide-angle views of the entire Earth. But they were positioned far away and still produced relatively low-resolution imagery. Next came putting satellites into lower orbits to get higher quality images, though at the expense of global coverage. Fast forward through a couple of generations of hardcore R&D and innovation, during which time camera technology improved many-fold. National weather agencies launched high-resolution satellite sensors in very low-altitude orbit, just 300-500 miles above ground. These could get us granular images of individual clouds and thunderstorms, which is a big deal since high-resolution weather models need that kind of detail to run effectively.

And so scientists have figured out how to get weather observations over the entire Earth from very far away OR very high-resolution images over a small area. Until the 2010s, there was no way to get weather observations from space that were both high-resolution in detail and global in coverage. Moreover, we are only talking about images to this point, not about the underlying data. By data, we mean vertical slices through clouds and distinguishing snow, rain, sleet, or even hail with precision. The cutting edge of weather observations from space is therefore to achieve global data coverage that describes where and how tall individual storms are, plus how quickly they are moving.

After recent breakthroughs in engineering, weather satellites that can do that now fit in packages as small as a shoebox (i.e., “cubesats” or “smallsats”). Compared to previous generations of satellites, these cubesats are miniature and weigh appreciably less, and they need only a fraction of the battery power for the improved sensors they carry. We can launch whole swarms of these cubesats into operational orbit for less than the cost of a single legacy satellite. And by elegantly choreographing the positions of cubesats in orbit, it is possible to get very-high-resolution observations of clouds, hail, rain, and snow almost everywhere. Moreover, with approximately 30 smallsats in the operational constellation, it will be possible to revisit any location on Earth every hour or less.

Some of the best, brightest atmospheric scientists and engineers are working at Tomorrow.io to bring commercial small-satellite solutions to reality. The near-real-time “swarm” observation strategy will effectively eliminate the outstanding gaps in global weather data. The question then becomes, what do we do with such valuable weather data?

Leveraging An Unprecedented Stream of Hi-Res Weather

Tomorrow.io’s weather and climate security platform can easily handle the terabytes of data that a cubesat constellation generates each day. Our space and sensors team purposely designed our data processing systems to work best on the fastest cloud-based computers for scalability, redundancy, and resilience. We already optimize for merging these types of dense observations with weather model data. And our calculations run continuously, with better than 99.9% uptime.

With the underlying computer systems humming along, reliable and true, Tomorrow.io is able to focus the majority of our efforts on the weather forecast. We sift through publicly available global weather models and apply our own proprietary high-resolution model to tailor the forecast to our customers’ needs. Applying the traditional forecast process, we are able to resolve the weather down to individual city blocks, such as representing the turbulent winds that flow past individual buildings or on final approach to a busy airport.

Practically speaking, we populate nationwide and even global weather forecasts out to multiple days ahead, all in as little as a couple of minutes. Contrast that with conventional weather models that update just once every couple of hours. As an added benefit, our cloud-native weather engine enables us to efficiently package and subsequently archive weather analyses for recall on demand.

And with the release of our Historical Weather API, we now have access to seven years of high-resolution historical weather data. Customers across all industries are already benefiting from mining our data. They spend less time reacting to intense weather and more time preparing and developing sound business adaptation strategies; with contextualized and hyper-localized insight, weather impacts do not escalate into full-blown disasters.

Delivering Tomorrow’s Weather and Climate Security, Today

Here at Tomorrow.io, we know that businesses that care about the climate will also care profoundly about the weather. The reason is that local weather is the day-to-day expression of a constantly changing climate. And as recent history has shown, winter storms, hurricanes, heat waves, and tornado outbreaks can strike quickly over large areas. To meet these challenges, we deliver the highest resolution weather forecast possible and enable teams to avoid disruptions that can result from short-fuse weather impacts nationwide.

We build our end-to-end weather solutions on a simple foundation: observe, calculate, deliver. We are reimagining the weather forecast by:

- Efficiently gathering weather observations from dense ground networks, public satellites, and soon from our own proprietary satellite constellation

- Running an extensive suite of rapid-update computer weather models, from global to hyper-local scale, every few minutes

- Transforming the forecast into actionable insights for businesses in critical economic sectors

Being on the verge of launching an entirely new constellation of precision-weather small satellites to space in the coming years is an extraordinary prospect. We are excited to achieve substantial enhancements to our weather observation stream in the form of precision global weather data every hour or less. And with that boost in observations from orbit, Tomorrow.io will realize significant improvements in both forecast accuracy and contextualized weather insights for decision-making. Tomorrow.io is the world’s first weather and climate security platform, and with it, your business can take control of tomorrow today.